SCARS Institute’s Encyclopedia of Scams™ Published Continuously for 25 Years

The FBI Issues A Warning About Synthetic Content

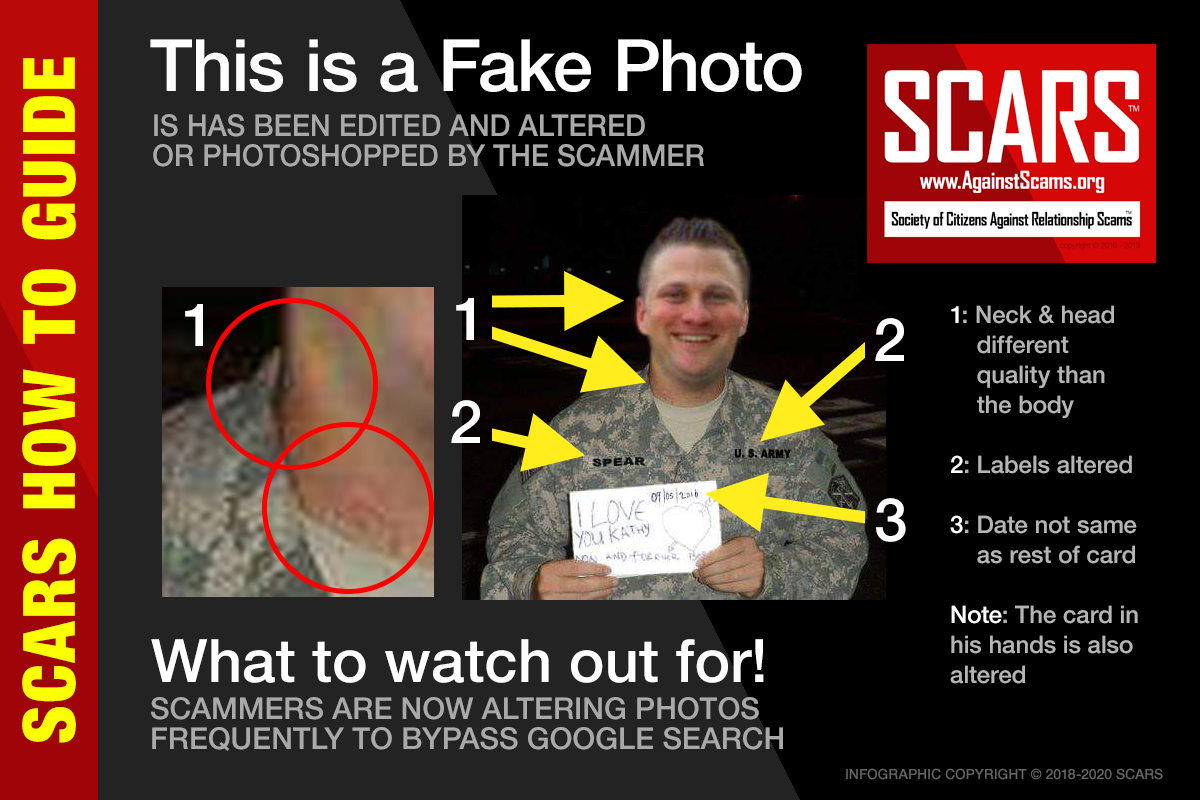

Helping You Spot Fake, Altered, or Photoshopped Images

Synthetic Content Now Represents A Significant Threat!

Malicious Actors Almost Certainly Will Leverage Synthetic Content for Cyber and Foreign Influence Operations

Malicious actors almost certainly will leverage synthetic content for cyber and foreign influence operations in the next 12-18 months.

Foreign actors are currently using synthetic content in their influence campaigns, and the FBI anticipates it will be increasingly used by foreign and criminal cyber actors for spearphishing and social engineering in an evolution of cyber operational tradecraft.

Explaining Synthetic Content

The FBI defines synthetic content as the broad spectrum of generated or manipulated digital content, which includes images, video, audio, and text. While traditional techniques like Photoshop can be used to create synthetic content, this report highlights techniques based on artificial intelligence (AI) or machine learning (ML) technologies. These techniques are known popularly as deepfakes or GANs (generative adversarial networks). Generally, synthetic content is considered protected speech under the First Amendment. The FBI, however, may investigate malicious synthetic content which is attributed to foreign actors or is otherwise associated with criminal activities.

Recent and Anticipated Uses of Synthetic Content

Since late 2019, private sector researchers have identified multiple campaigns which have leveraged synthetic content in the form of ML-generated social media profile images.

Additionally, advances in AI- and ML-based content generation and manipulation technologies could likely be used by malicious cyber actors to advance their tradecraft and increase the impact of their activities. ML-generated profile images may help malicious actors spread their narratives by making the message and messenger appear more authentic to consumers, increasing the likelihood that the narratives will be more widely shared.

- Russian, Chinese, and Chinese-language actors are using synthetic profile images derived from GANs, according to multiple private-sector research reports. These profile images are associated with foreign influence campaigns, according to the same sources.

- Since 2017, unknown actors have created fictitious “journalists” who generated articles that were unwittingly published and amplified by a variety of online and print media outlets, according to press reports. These falsified personas often have a seemingly robust online presence, including the use of GAN-generated profile images; however, basic fact checks can quickly reveal that the profiles are fraudulent.

Currently, individuals are more likely to encounter information online whose context has been altered by malicious actors than fraudulent, synthesized content. This trend, however, will likely change as AI and ML technologies continue to advance.

We anticipate malicious cyber actors will use these techniques broadly across their cyber operations—likely as an extension of existing spearphishing and social engineering campaigns, but with more severe and widespread impact due to the sophistication level of the synthetic media used.

- Malicious cyber actors may use synthetic content to create highly believable spearphishing messages or engage in sophisticated social engineering attacks, according to a late 2020 joint research report.

Synthetic content may also be used in a newly defined cyber-attack vector referred to as Business Identity Compromise (BIC). BIC will represent an evolution in Business Email Compromise (BEC) tradecraft by leveraging advanced techniques and new tools. Whereas BEC primarily includes the compromise of corporate email accounts to conduct fraudulent financial activities, BIC will involve the use of content generation and manipulation tools to develop synthetic corporate personas or to create a sophisticated emulation of an existing employee.

This emerging attack vector will likely have very significant financial and reputational impacts to victim businesses and organizations.

How to Identify and Mitigate Synthetic Content

- Visual anomalies such as distortions, warping, or inconsistencies in images and video may indicate synthetic content, particularly in social media profile avatars (profile images). For example, distinct, consistent eye spacing and placement across a wide sample of synthetic images provides one indicator of synthetic content.

- Similar visual inconsistencies are typically present in synthetic video, often demonstrated by noticeable head and torso movements as well as syncing issues between face and lip movement and any associated audio.

- Third-party research and forensic organizations, as well as some reputable cybersecurity companies, can aid in the identification and evaluation of suspected synthetic content.

- Finally, familiarity with media resiliency frameworks like the SIFT methodology can help mitigate the impact of cyber and influence operations.

The “SIFT” methodology encourages individuals to Stop, Investigate the source, Find trusted coverage, and Trace the original content when consuming information online.
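The eye-placement indicator described in the list above can be illustrated with a rough sketch. It assumes the eye centers for each profile image have already been located by some face-landmark tool (not shown here) and expressed as fractions of the image width and height. The function names and the 0.01 threshold are hypothetical choices for illustration only, not part of the FBI guidance.

```python
from statistics import pstdev


def eye_placement_spread(eye_centers):
    """Return the largest spread among the four eye coordinates.

    eye_centers: list of ((left_x, left_y), (right_x, right_y)) tuples,
    one per image, in normalized coordinates (0.0 to 1.0).
    Assumption: these values come from an external landmark detector.
    """
    if len(eye_centers) < 2:
        raise ValueError("need at least two images to compare")
    # Regroup per-image tuples into four columns: lx, ly, rx, ry.
    columns = zip(*[(lx, ly, rx, ry) for (lx, ly), (rx, ry) in eye_centers])
    # Population standard deviation of each coordinate across the sample.
    return max(pstdev(col) for col in columns)


def looks_uniform(eye_centers, threshold=0.01):
    """True when eye placement barely varies across the sample.

    The threshold is an illustrative assumption, not a calibrated value.
    """
    return eye_placement_spread(eye_centers) < threshold
```

A near-zero spread across many unrelated profiles is only one weak signal. Tightly cropped or auto-aligned genuine photos could produce the same result, so a flag from a check like this is best treated as a prompt for further review.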

Individuals and organizations can lower the risk of becoming victims of malicious actors using synthetic content by adopting good cyber hygiene and other security measures, including the following tips.

- Be aware of the potential for cyber or foreign influence activities using synthetic content. Be alert when consuming information online, particularly when topics are especially divisive or inflammatory;

- Seek multiple, independent sources of information;

- Do not assume an online persona or individual is legitimate based on the existence of video, photographs, or audio on their profile;

- Seek media literacy or media resiliency resources like SIFT, as well as training to harden individuals and corporate interests from the potential effects of influence campaigns;

- Use multi-factor authentication on all systems, especially on shared corporate social media accounts;

- Train users to identify and report attempts at social engineering and spearphishing which may compromise personal and corporate accounts;

- Establish and exercise communications continuity plans in the event social media accounts are compromised and used to spread synthetic content;

- Do not open attachments or click links within emails received from senders you do not recognize;

- Do not provide personal information, including usernames, passwords, birth dates, social security numbers, financial data, or other information in response to unsolicited inquiries;

- Be cautious when providing sensitive personal or corporate information electronically or over the phone, particularly if unsolicited or anomalous. Confirm, if possible, requests for sensitive information through secondary channels;

- Always verify the web address of legitimate websites and manually type them into your browser.
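The last tip above, verifying a web address, can be sketched in code for readers who screen links automatically. This is a minimal illustration using Python's standard `urllib.parse` module. The allowlist and the function name `is_trusted_link` are assumptions made for this example; a real deployment would maintain its own list of known-good hosts.

```python
from urllib.parse import urlsplit

# Illustrative allowlist (assumption): hosts the reader already trusts.
TRUSTED_HOSTS = {"www.fbi.gov"}


def is_trusted_link(url, trusted_hosts=TRUSTED_HOSTS):
    """Accept a link only if it uses HTTPS and its exact host is allowlisted.

    Comparing the parsed hostname, rather than searching the raw string,
    rejects look-alikes such as "www.fbi.gov.example.com" and links that
    hide the real host behind an "@" sign.
    """
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    return parts.scheme == "https" and host in trusted_hosts


print(is_trusted_link("https://www.fbi.gov/contact-us/field-offices"))
print(is_trusted_link("https://www.fbi.gov.example.com/contact-us"))
```

The exact-match comparison is deliberately strict: a substring or "ends with" check would be easier to fool with a crafted domain name.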

The FBI’s Protected Voices initiative provides additional tools and resources to companies, individuals, and political campaigns to protect against online foreign influence operations and cybersecurity threats.

Reporting Notice

The FBI encourages readers to report information concerning suspicious or criminal cyber activity to their local FBI field office. Additionally, corporations, individuals, or other entities who believe they are the target of foreign influence actors or other malign foreign entities are encouraged to contact their local FBI Field Office. Field office contacts can be identified at www.fbi.gov/contact-us/field-offices.

When available, each report submitted should include the date, time, location, type of activity, number of people, and type of equipment used for the activity, the name of the submitting company or organization, and a designated point of contact.

Administrative Note

Additional Resources

- 1 Report | Graphika | “IRA Again: Unlucky Thirteen” | 1 September 2020 | https://graphika.com/reports/ira-again-unlucky-thirteen/ | accessed on 2 September 2020.

- 2 Report | Graphika | “Step into My Parler” | 1 October 2020 | https://graphika.com/reports/step-into-my-parler/ | accessed on 3 October 2020.

- 3 Report | Graphika | “Operation Naval Gazing” | 22 September 2020 | https://graphika.com/reports/operation-naval-gazing/ | accessed on 23 September 2020.

- 4 Report | Graphika | “Spamouflage Goes to America” | 12 August 2020 | https://graphika.com/reports/spamouflage-dragon-goes-to-america/ | accessed on 13 August 2020.

- 5 Report | Graphika and DFRLab | “#OperationFFS: Fake Face Swarm” | 20 December 2019 | https://graphika.com/reports/operationffs-fake-face-swarm/ | accessed on 23 December 2019.

- 6 News Article | Buzzfeed | “The Independent Used A Journalist Who Doesn’t Exist On A Football Report from Cyprus.” | 16 October 2017 | https://www.buzzfeed.com/markdistefano/the-independent-used-a-journalist-who-doesnt-exist-on-a | accessed on 17 February 2020.

- 7 News Article | Reuters | “Deepfake used to attack activist couple shows new disinformation frontier” | 15 July 2020 | https://www.reuters.com/article/us-cyber-deepfake-activist/deepfake-used-to-attack-activist-couple-shows-new-disinformation-frontier-idUSKCN24G15E | accessed on 17 February 2020.

- 8 Report | Trend Micro Research | “Malicious Uses and Abuses of Artificial Intelligence” | 19 November 2020 | https://europol.europa.eu/publications-documents/malicious-uses-and-abuses-of-artificial-intelligence/ | accessed on 20 November 2020.

- 9 Blog Article | Infodemic.blog | “Sifting through the Pandemic” | 2021 | https://infodemic.blog | accessed on 17 February 2021.

TAGS: SCARS, Information About Scams, Anti-Scam, Scams, Scammers, Fraudsters, Cybercrime, Cybercriminals, Scam Victims, Online Fraud, Online Crime Is Real Crime, Scam Avoidance, Synthetic Content, Altered Images, Photoshopped Images, Fake Photos, FBI Warning


By the SCARS™ Editorial Team

Society of Citizens Against Relationship Scams Inc.

A Worldwide Crime Victims Assistance & Crime Prevention Nonprofit Organization Headquartered In Miami Florida USA & Monterrey NL Mexico, with Partners In More Than 60 Countries

To Learn More, Volunteer, or Donate Visit: www.AgainstScams.org

Contact Us: Contact@AgainstScams.org

-/ 30 /-


Important Information for New Scam Victims

- Please visit www.ScamVictimsSupport.org – a SCARS Website for New Scam Victims & Sextortion Victims

- Enroll in FREE SCARS Scam Survivor’s School now at www.SCARSeducation.org

- Please visit www.ScamPsychology.org – to more fully understand the psychological concepts involved in scams and scam victim recovery

If you are looking for local trauma counselors please visit counseling.AgainstScams.org or join SCARS for our counseling/therapy benefit: membership.AgainstScams.org

If you need to speak with someone now, you can dial 988 or find phone numbers for crisis hotlines all around the world here: www.opencounseling.com/suicide-hotlines

A Note About Labeling!

We often use the term ‘scam victim’ in our articles, but this is a convenience to help those searching for information in search engines like Google. It is just a convenience and has no deeper meaning. If you have come through such an experience, YOU are a Survivor! It was not your fault. You are not alone! Axios!

A Question of Trust

At the SCARS Institute, we invite you to do your own research on the topics we speak about and publish. Our team investigates the subject being discussed, especially when it comes to understanding the scam victim-survivor experience. You can do Google searches, but in many cases you will have to wade through scientific papers and studies. However, remember that biases and perspectives matter and influence the outcome. Regardless, we encourage you to explore these topics as thoroughly as you can for your own awareness.

Statement About Victim Blaming

SCARS Institute articles examine different aspects of the scam victim experience, as well as those who may have been secondary victims. This work focuses on understanding victimization through the science of victimology, including common psychological and behavioral responses. The purpose is to help victims and survivors understand why these crimes occurred, reduce shame and self-blame, strengthen recovery programs and victim opportunities, and lower the risk of future victimization.

At times, these discussions may sound uncomfortable, overwhelming, or may be mistaken for blame. They are not. Scam victims are never blamed. Our goal is to explain the mechanisms of deception and the human responses that scammers exploit, and the processes that occur after the scam ends, so victims can better understand what happened to them and why it felt convincing at the time, and what the path looks like going forward.

Articles that address the psychology, neurology, physiology, and other characteristics of scams and the victim experience recognize that all people share cognitive and emotional traits that can be manipulated under the right conditions. These characteristics are not flaws. They are normal human functions that criminals deliberately exploit. Victims typically have little awareness of these mechanisms while a scam is unfolding and a very limited ability to control them. Awareness often comes only after the harm has occurred.

By explaining these processes, these articles help victims make sense of their experiences, understand common post-scam reactions, and identify ways to protect themselves moving forward. This knowledge supports recovery by replacing confusion and self-blame with clarity, context, and self-compassion.

Additional educational material on these topics is available at ScamPsychology.org, ScamsNOW.com, and other SCARS Institute websites.

Psychology Disclaimer:

All articles about psychology and the human brain on this website are for information and education only.

The information provided in this article is intended for educational and self-help purposes only and should not be construed as a substitute for professional therapy or counseling.

While any self-help techniques outlined herein may be beneficial for scam victims seeking to recover from their experience and move towards recovery, it is important to consult with a qualified mental health professional before initiating any course of action. Each individual’s experience and needs are unique, and what works for one person may not be suitable for another.

Additionally, any approach may not be appropriate for individuals with certain pre-existing mental health conditions or trauma histories. It is advisable to seek guidance from a licensed therapist or counselor who can provide personalized support, guidance, and treatment tailored to your specific needs.

If you are experiencing significant distress or emotional difficulties related to a scam or other traumatic event, please consult your doctor or mental health provider for appropriate care and support.

Also read our SCARS Institute Statement about Professional Care for Scam Victims – click here to go to our ScamsNOW.com website.
